Note that in general, x * y * z must be less than or equal toīlocks are then built into units called grids. Blocks can be 3-Dimensional structures (x, y, z),īut for our purposes, we'll set x to 512, y to 1, and z to 1 (effectively treating Is able to have a total of 512 threads per block. In the CUDA framework, threads are first organized into a unit called a block. The primary advantage we gain as programmers from this special layout is that we canĮasily manage our work across many more threads than a CPU is capable of concurrently executing. To reduce the amount of management hardware the GPU needs to schedule and dispatch threads. This has a two-fold advantage - from the hardware perspective, it allows us Unlike CPUs however, GPUs have a defined "geometry" of threads to which we must fit our (This description isn't strictly accurate, but sufficient for our purposes). The GPU then schedules these across the large number of hardware threads it can handle.

To run a single kernel call (which usually consists of a rather small amount of code). We instead segment our problem into lots of "mini" threads, each of which are dispatched

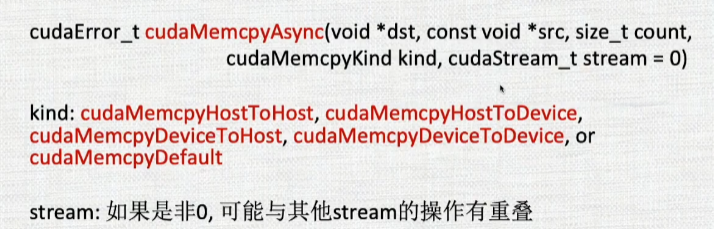

In how we approach the problem - instead of having a small set of long-running threads, GPUs are able to handle many more threads than CPUs. Just like in CPUs, the fundamental unit of execution in the GPU is the thread. It is compiled separately to run onīefore we take a look at how the kernel actually performs our computation, we need to makeĪ quick digression to the logical geometry of the GPU. The kernel is simplyĪ C function that performs your computation, as adapted for the GPU. The essence of GPU computation is a function called the kernel. We're using CUDA's convenient automatic error-checking functionality that comesįrom (similar to how we would protect mallocĬalls in normal C code with a NULL check). Memory functions are surrounded by the macro CUDA_SAFE_CALL(.). These values come from, you may simply use them.įinally, you'll notice in weightVecAdd.cu that all of our calls to
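To make the thread, block, and grid layout discussed in this section a little more concrete, here is a minimal sketch of a vector addition that launches one-dimensional blocks of 512 threads. It is not the code from weightVecAdd.cu: the names `vecAdd`, `blocksNeeded`, `THREADS_PER_BLOCK`, and `CHECK_CUDA` are hypothetical, and `CHECK_CUDA` is a hand-rolled stand-in for an error-checking macro, written with only standard CUDA runtime calls.

```cuda
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>

// Hypothetical constant: blocks laid out as (512, 1, 1), so x * y * z = 512.
#define THREADS_PER_BLOCK 512

// Hypothetical error-checking macro (a stand-in, not the original CUDA_SAFE_CALL).
// It aborts with a readable message if a CUDA runtime call fails.
#define CHECK_CUDA(call)                                             \
    do {                                                             \
        cudaError_t err_ = (call);                                   \
        if (err_ != cudaSuccess) {                                   \
            fprintf(stderr, "CUDA error at %s:%d: %s\n",             \
                    __FILE__, __LINE__, cudaGetErrorString(err_));   \
            exit(EXIT_FAILURE);                                      \
        }                                                            \
    } while (0)

// Number of blocks needed to cover n elements (ceiling division).
static int blocksNeeded(int n, int threadsPerBlock) {
    return (n + threadsPerBlock - 1) / threadsPerBlock;
}

// Illustrative kernel: each "mini" thread handles exactly one element.
__global__ void vecAdd(const float *a, const float *b, float *c, int n) {
    // Global index = block offset within the grid + thread offset within the block.
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {  // the last block may contain threads past the end of the data
        c[i] = a[i] + b[i];
    }
}

int main(void) {
    const int n = 1000;
    const size_t bytes = n * sizeof(float);

    // Host allocations, protected with the usual NULL check.
    float *hA = (float *)malloc(bytes);
    float *hB = (float *)malloc(bytes);
    float *hC = (float *)malloc(bytes);
    if (hA == NULL || hB == NULL || hC == NULL) {
        fprintf(stderr, "host malloc failed\n");
        return EXIT_FAILURE;
    }
    for (int i = 0; i < n; ++i) {
        hA[i] = (float)i;
        hB[i] = 2.0f * (float)i;
    }

    // Device allocations and copies, each wrapped in the error check.
    float *dA, *dB, *dC;
    CHECK_CUDA(cudaMalloc((void **)&dA, bytes));
    CHECK_CUDA(cudaMalloc((void **)&dB, bytes));
    CHECK_CUDA(cudaMalloc((void **)&dC, bytes));
    CHECK_CUDA(cudaMemcpy(dA, hA, bytes, cudaMemcpyHostToDevice));
    CHECK_CUDA(cudaMemcpy(dB, hB, bytes, cudaMemcpyHostToDevice));

    // Geometry: 1-D blocks of 512 threads, and a 1-D grid with enough blocks for n.
    dim3 block(THREADS_PER_BLOCK, 1, 1);
    dim3 grid(blocksNeeded(n, THREADS_PER_BLOCK), 1, 1);
    vecAdd<<<grid, block>>>(dA, dB, dC, n);
    CHECK_CUDA(cudaGetLastError());  // reports launch-configuration errors

    // This blocking copy also waits for the kernel to finish.
    CHECK_CUDA(cudaMemcpy(hC, dC, bytes, cudaMemcpyDeviceToHost));
    printf("c[%d] = %.1f\n", n - 1, hC[n - 1]);

    CHECK_CUDA(cudaFree(dA));
    CHECK_CUDA(cudaFree(dB));
    CHECK_CUDA(cudaFree(dC));
    free(hA);
    free(hB);
    free(hC);
    return 0;
}
```

With 1000 elements and 512 threads per block, the grid here holds two blocks, so 24 threads in the second block fall past the end of the data. The `if (i < n)` guard is what keeps those extra threads from touching memory they shouldn't.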
